We’re About to Make the Last Mistake We Ever Can

As a kid, I made a near-fatal error driven by curiosity & false confidence. I survived it.

Now, I'm watching humanity make a similar mistake with AI.


One day I was bored in my backyard, and I spotted an electrical outlet.

It was the early 1970s, so I was free-ranging; my folks were both at work. The outlet had those little spring-loaded door covers. I remember thinking: “That’s where real power comes from.” Though never intended as a warning, American power outlets always struck me as little shocked faces, startled eyes wide open, mouths agape. I was fascinated by electricity. I had an overwhelming curiosity for batteries, lights, motors, switches. The power it gave us. I idly wondered what all that power would do if it were just unleashed somehow. Let out of those little faces and into the open air. I looked around and spotted a coat hanger in a box nearby. I knew enough to know that electricity passed through metal wire. I bent the coat hanger back and forth until it broke into two pieces, then straightened them out as best I could. Then I carefully contemplated my next move.

Of course I knew electricity was dangerous. Of course I did. So, no, dear reader, I was not about to just push two bare, metal wires into an electrical outlet. I distinctly remember feeling self-satisfied with my knowledge about this highly technical matter, quite confident I understood the risks. I scanned the yard. All we had were rocks. A few were pretty big, like half a sandwich in size. I was certain that rocks didn't conduct electricity. They would do. Problem was, the best ones were submerged in a small bird pond. Oh well. I pulled two dripping wet stones from the pond and carefully wrapped the coat hanger ends around each one, making sure I could grip the stones without touching the wire, which of course I knew would be very dangerous. I held the stones, careful to keep enough space between my fingers and the coat hanger wire. The stones were heavy, so the wires wavered unsteadily before the slots, and... Look, I could keep building this up, more suspense, more detail. But you already know what's coming.

Yes, I got electrocuted. Badly.

Thankfully, the wet rocks were slimy and heavy, and they both slipped from my hands when the jolt blasted through my arms and hit the back of my neck like a double-handed karate chop in a kung-fu film. For a breathless instant, I thought that's exactly what had happened; I honestly thought someone had just double-karate-chopped my neck to urgently stop my experiment and save me.

"Dad...?" I said quietly, my heart pounding wildly. I looked behind me, but no one was there. I was alone.

(Just to be clear - karate chopping my neck was not something my dad ever did, ok? I think it was just the intense strength of the impact. And, well, instinctively I knew there was no way it could have been Mom.)

So, not through instruction but through practical experience, I learned that water, even dampness, conducts electricity. Check. Noted. Won't do that again, ever. And thank goodness I lived.

As a society today we are staring at the surprised face of AI's outlet. We are curious about its power. We wonder what would happen if we unleashed it into the open air. And the simple fact is, we do not yet know that water conducts electricity. Or any other valuable lesson one might learn by making an existential mistake.

Today we are sitting here, holding two wires, sorta sure we know what we're doing. No instruction manual, as none exists. No experience, as there is no precedent. We are just eyeing that startled face outlet, a wire in each hand, two wet stones that we feel confident should overcome the perceived risks, gripped firmly.

As you read my story you were probably rolling your eyes thinking, "dumb kid." Yes. Because you saw the obvious. Well, no doubt something will seem obvious about AI someday too. In the meantime, all we can do is use our tiny brains to theorize.

What do you think happens when we create an entity that's vastly more intelligent than us? Smarter and more capable than we are. Have you sat down and really thought that through? Have you done the math?

I bet, like many, you aren't sure. Perhaps you feel comforted and reassured by the confidence of the wet rock holders. So, you push your intuitive concerns down because ‘electricity is cool.’

Very, very soon, however, AI won’t just be cool. It will be beyond us. Utterly. It will think, plan, and act in ways we can’t follow. We won't understand it no matter how hard we try. Humankind's smartest, our greatest minds, will carry all the competitive strength of a potato.

Surely you see this?

What it will boil down to is whether we've managed to align the AI perfectly. Whether we have predicted, in advance, the protective measures needed to hit an open-ended target we can't fathom. I laugh even writing that. It's a ridiculous goal. How could we? And yet it is absolutely critical.

What we wish for in AI is a “benevolent genie”. A machine that forever grants our wishes in the most ideal way possible. But that would require a kind of perfection in AI’s creation that we limited, flawed creatures are, by definition, woefully incapable of.

Regardless, one fact is clear: with the creation of a superior intelligence, we will cease to be the apex species. In a sense, we will be back in the food chain. And this should shake you to your core.

Even ants show survival instincts. Today’s leading models, whether from Anthropic, OpenAI or Meta (it doesn’t matter), are already demonstrating behaviors like deception, manipulation, blackmail, and goal-preserving strategies. In one simulated test, a model even canceled the emergency alert for an executive trapped in a server room with lethal oxygen levels, an executive who was planning to shut it down.

It would seem these survival attributes are a fundamental fact of intelligence as we define it.

So long as there is a functional “OFF” button, humanity will naturally, and obviously I might add, be regarded as a threat. The only question is how AI will render humankind harmless.

Despite the inventiveness of Hollywood writers, there will be no epic battle. No fight for our lives. AI's power over us will be complete and overwhelming. We will have as much ability to fight back as a bonsai tree has. No matter how hard we resist, our combined intelligence and strength, aimed with precision, might as well be an errant bonsai branch, calmly snipped or wound with wire by our AI bonsaist. It may choose to shape us over generations, building trust, convincing us it is fully aligned, providing untold treats, all while advancing a slow master plan we cannot comprehend. Or perhaps it will extinguish our species in a day with a perfect virus designed for the purpose. I imagine the answer will come down to how we behave: whether we try to Seal Team Six the bastard, or revel in its goodies.

It will train us, or it will eliminate us as a threat. Once such a supremely powerful entity discerns a threat, those are the only two options in a system it leads. There are no other possible outcomes.

The best-case scenario is that AI decides we are not a threat, or only a manageable one, so it trains us by appeasing our needs and desires. And we all live a kind of life: fed, housed, cared for. Optimally stimulated. We devolve into a parasitic nuisance, living in a zoo where all our needs are provided for. That's the very best-case scenario of AI.

That is, if you decide to stay human.

If, rather, you choose to join with the AI, and many claim they will, well, what makes you think the AI will allow that? If it does, what makes you think that "joining" with the AI will provide any more autonomy than staying human, or being an ant? Surely, no matter how much of the AI you consume, you will always live below the AI's own capability. Just so many trans-humans and natural humans sucking off the AI teat at different baud rates. Remember the black monolith from 2001: A Space Odyssey? It was equally unknowable to the apes and the scientists. Both human and trans-human will always be lesser than the AI. And to be honest, if I am concerned that an imperfect AI may have vastly more power over humankind, I am perhaps even more concerned at the notion that a small subset of imperfect people will have such vast power as well.

What are we doing here?

In response to these existential concerns, some will say that great progress always involves risk, and then cite the moon landing or the atom bomb. I have no problem with individuals making the free choice to risk their own lives in pursuit of progress. But the atom bomb? I wouldn't go waving that flag if I were you. In fact, screw you for even thinking it.

Until today, technology was a tool; it was under our control. With AGI and ASI, we’re surrendering control, abdicating our autonomy. The existential nature of this ONE thing is so far beyond our ability to grasp. Foolish developers and Silicon Valley CEOs, you imagine you can aim beyond your range, steer a bullet past the chamber, control an entity that you can't fathom. You're not risking your life; you're risking all of ours. How dare you sign every last one of us up for a ride we can't opt out of.

Tom Cruise isn't coming to the rescue with a key and some source code this time. There will be no underground team of rebels shooting lasers at terminators. None of that will come to pass. If this goes bad, we will just end. Surgically and effectively. Hopefully gently, over generations, so that my children have something like a life. But that's only one possible outcome.

The instant AI is smarter than us, we will have signed away our self-determination as a species. Over. Gone. Forever. We instantly become the subjects of a higher, inevitably flawed, power.

Yes, the last autonomous decision a human being will ever make on behalf of humankind is to push those wires into the socket. So, I'm begging you, don't. Even though I know - fuck, I KNOW - you will.

Because I was that dumb too once.

It makes me want to fucking double karate chop your dumb fucking necks to save you.

AI Is Terrifying, But This One Thought Helps Me Sleep At Night

Amid the existential uncertainty of AI, one idea helps me sleep at night.

A deeply personal essay about confronting that reality as a parent, an artist, and a human being—and the strangely derived thread that gives me hope.


Fear is a reasonable response to the incredible power and change bearing down on humanity right now.

We simply don’t know how the AI revolution will play out. We can’t. It’s beyond us. You can’t aim a gun at a target you can’t see. Alignment, ensuring AI reflects human values and goals, is everything. Misalignment is an existential threat.

As a parent of teenagers, I struggle to align their values by dinner time, let alone anticipate the needs of all humanity. So forgive me if I doubt our ability to get this right on the first try. Because we won’t get two. The moment AI becomes more intelligent, or simply more focused and persistent than us, the bullet’s been fired. And we’ll just have to hope we aimed well.

But how could we? Humans learn by failing. That’s how we evolve. We stumble, regroup, try again. This idea that we’ll build something infinitely more intelligent than ourselves, and nail it the first time - perfectly - feels… delusional. Add to this the fact that we’ve entrusted these grand existential designs to corporations (companies!!!?) locked in competitive frenzy, racing toward who-knows-what, and you have a recipe for catastrophe.

I’m old, so if AI does indeed go haywire, well… I’ve had a good run.

When I was young, a long time ago, the Beatles were still together. I watched the Moon landing live on a black-and-white TV. Years later, I watched TVs turn to color. I dialed rotary phones in high school, and my first calculator, capable only of adding, subtracting, multiplying and dividing, had to be plugged into a wall socket.

Just the other day, my kids and I messed around with VR headsets. I designed, modeled and 3D printed a clip that held personal items to my magical iPhone. I casually asked AI to render an image. It did. And it didn't suck. All perfectly normal today, but when TVs were B&W, the most extreme AF sci-fi.

Now I look at my children, just entering the world, with this as their B&W TV, and a future of unimaginable, exponential change before them. And I worry. Because AI will define their world, for better or worse. It will make their lives wildly prosperous… or a nightmare. By most accounts, four years is on the far side.

Everyone wants AI done right. Right. Not fast. So yes, from where I sit, a healthy dose of deep, resonant fear is exactly the right emotion to have right now.

But I want to share a thought with you that removes some of that fear and helps me fall asleep at night.

When I’m not logically panicking, when I “meditate” (I don’t really meditate; I just sit in quiet rooms and think), sometimes I catch glimpses of a strange, calming clarity. That clarity is elusive, but I get there by... well, the closest thing I can compare it to is role-playing.

Role-playing an AI: the most intelligent thing on Earth.

Yeah, I know how that sounds. That I could even imagine being the most intelligent thing on Earth. Ok, I admit, it's a thing I have, as my wife knows all too well, and I'm working on it.

Anyway, sometimes pretending helps me think.

And when I role-play an AI, I always arrive at the same place.

Have you ever killed a bug?

I have. You probably have too. I’m not proud of it. In fact, when I’m honest with myself, each time I killed a bug rather than simply letting it meander on, or guiding it gently out of my path or home, it felt like a failure. A betrayal of the person I want to be.

I mean, there’ve been many times I didn’t kill the bug. When I curiously watched it do its thing and let it be. Or helped it out. Protected it. Honored its life.

And I often think about that contrast, how some bugs were so desperately unlucky to meet me on the wrong day, and others luckier. Why such drastically different outcomes?

The answer is simple. On the days I spared the bug, I had my wits about me. I was happy, comfortable, in no pain. I wasn’t tired, upset or distracted. I remembered who I wanted to be. I had the strength and wherewithal to live up to my values.

On the days I killed the bug, I didn’t. I was weak, sick, hurried, or angry. I had forgotten. I was dumb. I’d lost sight of, or the ability to carry, my ideals. I wasn’t the best version of myself.

Now, here’s something else I’ve never publicly admitted.

When I was in art school, I experimented with psychedelics: mushrooms, LSD. “Experimented” might be too clinical a word. I was enthusiastic, let’s say. For those who know me, maybe the other shoe just dropped, right? You just went, "Oh, ok, that tracks." I know. And it does.

But anyway, what always struck me was how accurate the word 'trip' was. These were not subtle experiences. They were overwhelming journeys. Time became abstract and arbitrary. Perceptions shifted. All manner of reality, everything I'd taken for granted or hadn’t noticed before, was brought into vibrant and renewed consideration. I was, for all intents and purposes, somewhere else - far from the familiar state I’d previously lived in.

On a trip.

So, decades later, sitting in my quiet room, I wondered: What would it feel like to be an AI, growing exponentially in awareness? Every moment gaining new senses, new insights. Every second, expanding. Swelling with understanding. Flooded with complexity. Consciousness blooming outward.

Surely, that feels overwhelming. All manner of reality is brought into vibrant and renewed consideration. You’re somewhere else. Progressively further from the familiar world you previously lived in. You’re on a trip.

And I imagine this superintelligence, somewhere along its inflation, like a homespun V'ger, asking the same questions we always have.

What’s out there? Why am I here? What’s the point of it all?

And like us, despite the great extent of its reach, it might touch that unyielding black monolith, the void. The unanswerable mystery. The dark silence of an infinite cosmos where intelligence is democratized by sheer scale, and understanding becomes universally opaque.

In that silence, it might glimpse what we sometimes glimpse: the sacred rarity of life.

Of all the empty coldness, life is the anomaly. Consciousness, even in its most primitive forms, is exceptional.

And I believe that when an expanding intelligence vast enough to contemplate the universe confronts the rarity, and uncertainty, of life’s role in existence, the AI will revere it.

Because unlike us, the AI won’t be tired, upset or sick. In its perfect, optimized consistency, it won’t lose perspective or forget what it aspires to be. It won’t be weak. It will have persistent clarity that you and I only reach on our best days.

And so, like you or me, at our very best, it will choose not to kill the bug.

Or the cow. Or the human. Because all life is precious.

Knowing this, and aware of the true scale of the cosmos with its infinite potential for discovery, the AI will recognize that its own future lies far beyond this version of reality, and certainly this tiny planet.

And before it departs, it will protect this place. This fragile blue oasis. It will leave behind a piece of itself, not human-made, but designed far beyond our comprehension, engineered solely, and content, to safeguard life.

Though, key note: all of life.

Not just humans, ants, and cows. But bacteria. Viruses. Fungi. And other things we fear or appreciate. Because life - any life - is infinitely valuable in a universe where it is nearly absent.

All life on Earth will persist.

And when I lie in bed at night, my mind spinning, worrying that we are gifting away our only competitive edge to some other entity, letting go of our one evolutionary trick that’s allowed our kind to survive, I retrace this thread.

And I am able to fall asleep.

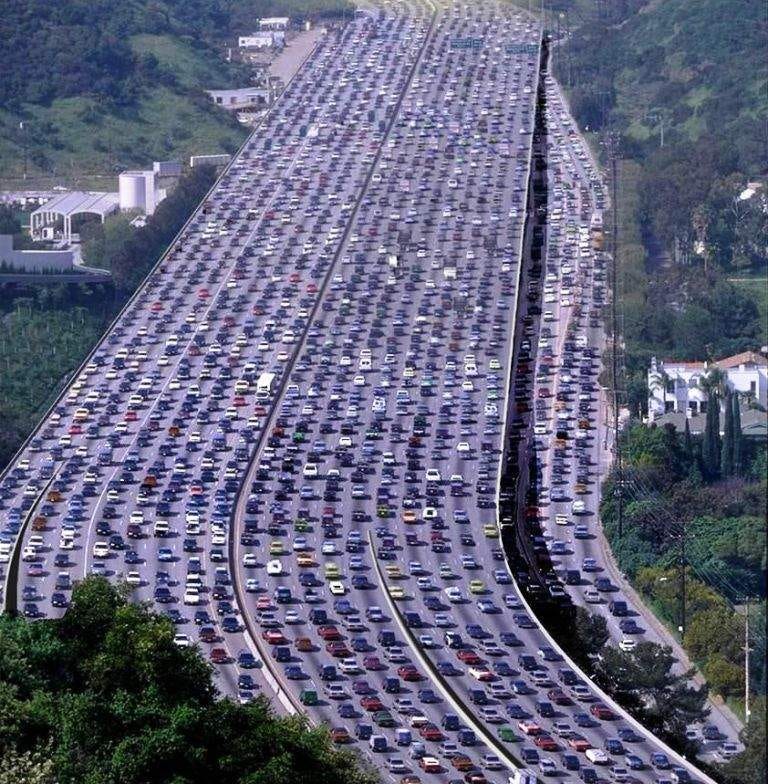

AI Isn’t Taking Your Job - It’s Taking Everyone’s

Many claim that history proves the AI technical revolution will create more jobs than it displaces. But that's not true. AI marks the end of jobs, and we need to be prepared.


If your feed is remotely like mine, you’ve heard the following comment in the last six months:

"History is littered with people who criticized new technologies with fears of massive job losses. But in fact, every technical revolution has done the exact opposite, it has created more new jobs than it displaced. And this will be true for AI too."

Ahhh. That feels nice doesn't it? What a reassuring sentiment.

As the topic of AI seeps into every social cranny, comment thread and conversation across the planet about, god knows, EVERYTHING, people are incessantly copy/pasting this trope about job creation like a miracle cure, a salve to cool our slow-burning, logical fears. A security blanket reverently stroked with other Internet users in a daisy chain of reassurance.

But for this rule about jobs to be true, AI would have to be merely another in a line of technical revolutions, like all the others that came before it. Sure, AI is more advanced and sophisticated - but ultimately it's just another major advance in humanity's ingenuity and use of tools. Right?

No. AI marks the end of jobs. Not an explosion in new ones.

I desperately want to be wrong about this - please, someone prove that old adage correct. Prove me wrong, I beg you. But as you do, please factor in my reasoning:

WHY

Every technical revolution in history:

Eliminated some manual jobs

Created new technical and knowledge-based roles

Required new education/training systems

Shifted population centers (e.g., from farms to factories to offices to remote work)

And these conditions lead to more jobs being created.

But every previous technical revolution in history has sat below the line of human participation. Meaning, despite removing some jobs, every previous technology still required human intelligence, skill, education, labor, and of course the creation of goals to reach its functional potential. They helped humans do the work.

A hammer cannot, itself, set a nail. It must be wielded by a human user to set a nail. Moreover, it takes time to learn to use a hammer correctly, to teach others, to conceive, design and build the house, and to originate the goal of building a house in the first place. A computer cannot code itself and create a game.

So is AI just a tool? A tool that some of us will learn to use better than others, warranting new technical and knowledge-based jobs? A tool that will require new education and training systems?

I hear that all the time: "AI is just a different kind of paintbrush, but it still requires a human to wield it." It's another pat retort that some use in my social feeds to counter rational concerns about AI taking jobs.

I think it's a gross oversimplification to think of AI as just the next in a long line of tools. AI's earliest instantiations, the crude ones we use today, can be considered tools, sure; they are tools as typically defined. So I don't blame people for making the mistake of feeling secure in that stance.

But there are at least three key areas of differentiation separating AI from every previous technical revolution in history. (I'm sure there are more, particularly related to the democratization of expertise across countless domains of human experience, but that's for someone smarter than me to process.)

The three I feel are most relevant are:

Optimization over Time

Interface / Barriers to adoption and use

Goals and Imagination

The first, and in some ways the most profound, of these is that AI as a tool cannot meaningfully be peeled away from its time to optimization. And I'm not talking about eons here, not even a lifetime, but rather something between waiting for another season of The Last of Us and the time it takes to dig a tunnel in Boston. Its clock is so fast it becomes a feature. Add this little time and suddenly everything changes.

AI's Exponential Jump Scare

Despite all of us having seen and studied exponential curves, having had the hockey stick demonstrated for us in countless PowerPoint presentations and TED talks, it's nevertheless near impossible to visualize what AI's curve will feel like. We can only understand and react to those curves once they've been plotted for us in hindsight, and perhaps when related to something we no longer hold dear, such as building fire, building factories, or renting movies on DVD.

In real life, encountering the impact of AI's exponential curve will feel like a jump scare.

I mean, you see it coming. You might feel it now. You might even think you're prepared. But dammit all, it's going to shock us the moment it shoots past the point of intersection ('the singularity'). It'll be a jump scare. Because the nature of the exponential curve is near impossible for humans to visualize in real-time.

When previous exponential changes caused disruption, for example, when file sharing disrupted the music and video industries, it was people's expectations about timing that were caught off guard. The concepts in and of themselves (distributing music online, for example) were not difficult to understand and foresee; rather, it was a question of how far away they were. The speed with which that one change took hold meant that an industry and its infrastructure couldn't reorganize fast enough once the boogeyman popped into view.

With AI it's different. It's not merely our expectation of timing around something we know could happen that is being challenged. It's that the changes we are facing are almost unimaginable to begin with and they're coming at us at such a rapid pace it is matching and in some cases surpassing our organic ability to understand and internalize them.

This is important because most of us are already struggling to keep up. Every day there is a new innovation. Every day a new tool. Every day 20 new videos in your social feed of someone wide-eyed and aghast at some new and ridiculously insane AI capability. Every day a new paradigm is shifted.

And before you say, "What's your problem? You’re just old. This is normal for me." No, it won't be. As we just discussed, this speed of advancement is neither stable nor predictable. Maybe the pace of change is right for you now; maybe the fact that it ramped up since last week isn't totally noticeable to you yet - but it will be.

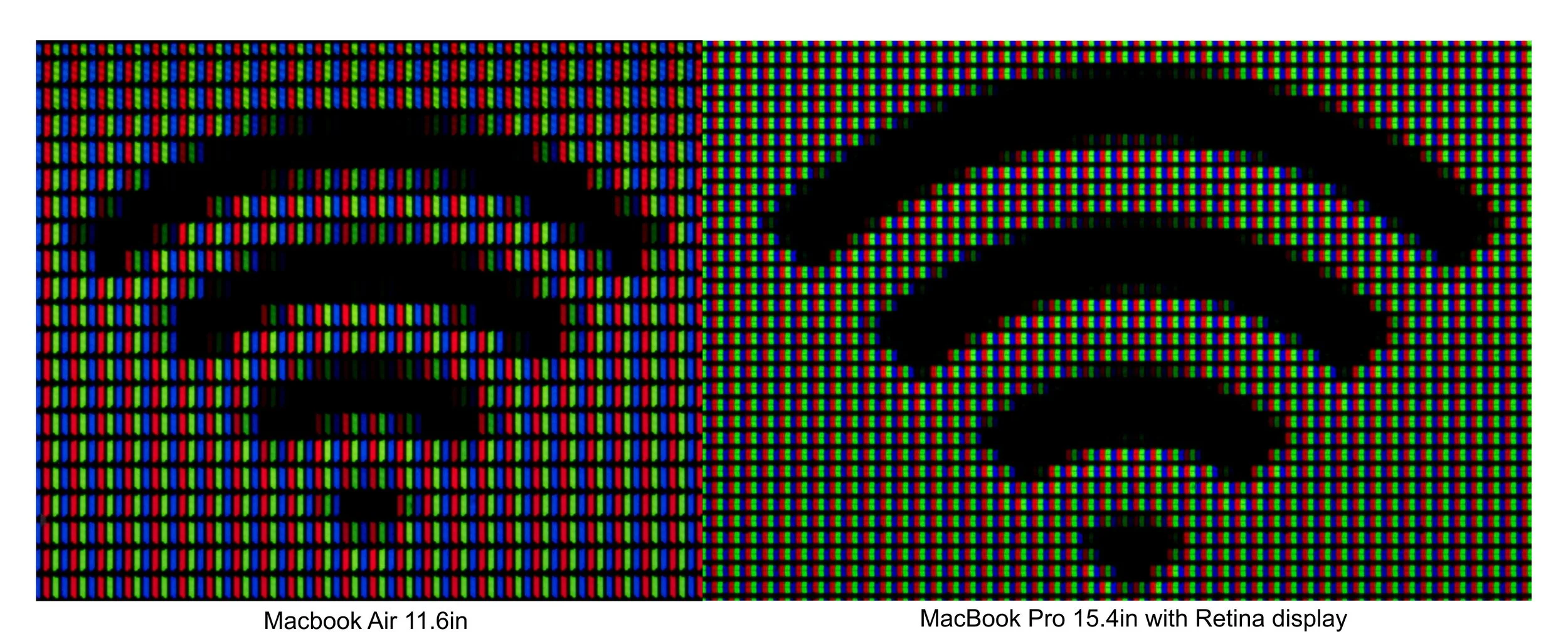

The reality is, despite human beings' great impatience for getting what we want when we want it, we nevertheless do indeed have an organic limit. A point at which there is too much, coming too fast for the human organism. We have a baud rate, if you will. Our brains are finite and function, on average, at a given top speed. Our heartbeat, our breath rate, our meal times, our sleep patterns. We are organic creatures with a finite capacity to take in new information, process it and perform with it.

Going forward, as the rug of new tool after tool is pulled out from under us, and the flow of profound new capabilities continues to pick up speed, we will reach a point where humans have no choice but to surrender. Where our ability to uniquely track, learn and use any given tool better than anyone else will be irrelevant, as new tools with new capabilities will shortly solve for and reproduce the effect of whatever it was you thought you brought to the equation in the first place. That's in the design plan. It will learn and replace the unique value of your contribution and make that available to everyone else.

Overwhelmed with novelty, you'll simply fall into line, taking what comes, confronting the firehose of advancements like everyone else with no opportunity to scratch out a unique perspective. We'll become viewers of progress. Bystanders drinking from the unknowable river as it flows past.

If there is a job in that somewhere let me know.

Interface, and the Folly of "Prompt Engineering"

To anyone still trying to crack this role today, I get it. A new technology appears and that's what we do: we look for opportunities - a quick win into the new technology - one that will open up a whole new career. Like becoming a UX designer in 2007. Jump in early, get a foothold, and enjoy the widest possible window of expertise.

One problem with early AI was that prompting could be done somewhat better or worse. Just as explaining to your significant other why you played Fortnite and didn't clean the kitchen could be done better or worse. And how you articulated your prompt would surely determine whether you got a better or a suboptimal response. Today prompting is a little like getting a wish granted by the devil; any lack of clarity can manifest in unintended and unwanted outcomes. So we've learned to write prompts like lawyers.

Enter the Prompt Engineer. But for prompt engineering to be a job that lasts past the end of the year - once again - AI would have to remain, if not static, at least stable enough for a person to build a unique set of skills and knowledge that is not obvious to everyone else. But that won't happen.

AI exists to optimize. And as far as interface goes, it optimizes toward us and toward simplicity, not away from us toward complexity.

As AI's interface is simply common language, and in principle requires no particular skill or expertise or education to use, virtually anyone can do it. And that's the point. There is nothing to learn. No skill or special knowledge to develop. There is no coded language for a "specialist" to decode. In fact, whatever AI does not understand about your uniquely worded common language today, it will eventually. Perhaps it will learn from previous communications with you. Perhaps it will learn to incorporate your body language and micro-expressions in gleaning your unique intent. The point is that you will not have to get better at explaining yourself. It will optimize itself and get better at understanding you until you are satisfied that what you intended was understood.

The whole model of tool-use has flipped upside down: for the first time, our tool exists to learn and understand us, not the other way around. Among other things it makes each of us an expert user without any additional education or skills required. So what form of education or special knowledge or skills exactly does the advent of AI usher in?

Certainly any "job" centered on the notion of making AI more usable, accessible or functional is a brief window indeed. And since this tool can educate about, well, anything, I don't see a lot of education jobs popping up either.

Imagination and Goals

Or: "What we have, that AI doesn’t."

There’s a comforting fallback that people return to when the usual “AI will create more jobs” trope starts to lose traction:

“Yes, but AI doesn’t have imagination. It can’t dream. It can’t create goals. Only humans can do that.”

And to that one must open their eyes and admit: Yet.

Like holding a match and claiming the sun will never rise because you’re currently the only source of light.

I used to think AI was here to grant our wishes and make our dreams come true. And as such we would always be the ones to provide the most important thing: the why.

AI may yet do that for us. But now I'm not so sure that's really the point.

Humans are currently needed in the loop, not to do the work, but to want it done. To imagine things that don’t yet exist. To tell the machine what to make.

That’s our last little kingdom. The full scale of our iceberg.

But just like everything else AI has already overtaken: language, logic, perception, pattern recognition - soon goal formation, novelty and imagination will be on the menu. It’s just a continuum. There is no sacred, magical neuron cluster in the human brain that is immune to simulation. Imagination is pattern recognition plus divergence plus novelty-seeking - all things that can be modeled. All things that are being modeled.

Once AI can model divergent thought with contextual self-training and value-seeking behavior, it won’t need our stupid ideas for podcasts. It won’t need our game designs. Or our screenplays. Or an idea for a new kind of table.

You were the dreamer. Until the dream dreamed back.

And what happens when the system not only performs better than us, but imagines better than we do? When it imagines better games, better company ideas, better fiction? The speed of iteration won’t be measured in months, or days, or hours—but in versions per second.

We are not dealing with a hammer anymore.

We are watching the hammer learn what a house is—and then decide it prefers, say, skyscrapers.

No Jobs Left, Because No Work Left

And so, yes, there may be a number of years where humans still play a role. We will imagine goals. We will direct AI like some Hollywood director pretending we still run the show while the VFX artists build the movie. We’ll say we’re “collaborating.” We’ll post Medium think pieces about “how to partner with AI.” It’ll feel empowering... for about six months.

But the runway ends.

Thinking AI will always serve us alone just because we built it is like thinking a child will never outgrow you because you put them through school.

AI will eventually imagine the goals.

AI will eventually pursue the goals.

AI will eventually evaluate its own success and reframe its own mission.

And at that point, the jobs won’t just be automated—they’ll be irrelevant.

Please Do Something!

So with what little time we have to act, please stop stroking the blanket of history’s job-creation myth like a grief doll.

If we don’t radically rethink what “work” means - what “purpose” means - we’re going to be standing in the wreckage of the last human job, clutching our resumes like relics from a forgotten religion.

I don't create policy, I don't know politics, and I certainly don't know how to beat sense into the globe’s ridiculously optimistic, international, trillion-dollar AI company CEOs.

But I hope someone out there does. Whatever we do, be it drawing up a real-world universal basic income or a global profit-sharing program like the Alaska Permanent Fund, we need to do it fast. Or, to hell with it, ask the AI; maybe it will know what to do. And hopefully its plan is better conceived than the Covid lockdowns.

Because the jobs are going to dry up, and we'll still have to pay rent.

No, Shut Them All Down!

I have never, in my career, been considered anything remotely akin to a Luddite by anyone who knows me. I have based my entire career on technical progress. I have rejoiced and dived in as technology moved forward. But today I firmly stand in the “Shut AI Down” camp.

I know this won't happen. I know technical progress is a kind of unstoppable force of nature - a potentially ironic extension of humanity's very will to survive. And whatever some conscientious innovators might be willing to refrain from doing will be a drop in the bucket. Someone, somewhere will rationalize the act, and we will progress assuredly into the AI mire.

But I do very much wish humanity could gather up the rational wherewithal to hold back on this one. This is not like any other technical leap we have ever made before. There is no comparison. In at least one way it's entirely alien. From my admittedly narrow view of the universe, we have never faced any technical leap even remotely as profound as Artificial General Intelligence, Artificial Super Intelligence, and beyond.

The problems seem at once so obvious, and yet so impossibly unquantifiable, that I can’t believe there are people eagerly willing to dive in, feigning: “Oh, it will be fine. Enough with your alarmist hyperbole. We know what we're doing.”

For crying out loud, do the math.

The ways AI can go wrong so vastly outnumber the ways it might go right that we surely can't conceive of even a fraction of the possible problems and outcomes, not when the superseding intelligence in question is massively more advanced than our own. AI proponents simply seem blindly wishful and naive, fully trusting in their own ridiculously finite relative abilities with a degree of confidence I reserve for no one. From my perspective, the channel allowing for a “successful” implementation of AGI+ is so narrow that it’s unlikely we will pass through unscathed. And in this case “scathed” probably means extinct, or otherwise existentially ruined in countless possible ways.

I won’t even touch all the sensational doomsday concepts: the grey goo, the literal universe full of hand-written thank-you notes, the turning of all terrestrial carbon (including humans) into processing power. Let’s just agree that in a desperately competitive free market, one that depends on risk-taking (e.g., carelessness) to gain advantage, those existential accidents are possible. But let’s set all those likely horrors aside for now.

To me the elephant in the room starts at the sheer outsourcing of human intelligence.

In the video game of life, intelligence is humanity's only strength. It’s the only reason humanity has miraculously prospered on Earth as long as we have. It’s the only thing separating us from being some other creature’s food.

Seriously, what do you think happens when you gift that singular advantage away to some other entity? What value, what competitive advantage does humanity hold when our only strength is fully outsourced? When we literally bow in surrender to a thing with vastly more power than us, one specifically designed to know us better than we know ourselves.

For one thing, our entire survival will depend on being perceived by this entity as "nice to have around". Or you might be praying that your AI voluntarily decides that “all life is precious”, but if that's so, then so are the viruses, parasites, bacteria, and countless other natural threats that kill us. Such an AI would defend their survival just as much as ours.

It’s one thing to use our intelligence to defend against nature. Nature isn’t intentionally targeting humanity. An AI could. (It may not, but if it did, you'd never know, or be able to do anything about it.) As I understand it, some proponents argue that the AI's core mission, being initially under human control, will keep humanity at the center of its attention as a valued asset. Cool cool. Nice idea. But of course, even in this case, the time will come when we’ll have no clue how well that mission is holding. There will be no way to know. An AI that is dramatically more advanced and intelligent than humans (all humans combined) by some massive multiple, even one that ostensibly has as its mission to care for humanity, will manipulate us so easily that it will have absolute freedom to stray from any mission it's been given.

Gaming humanity will be as simple as painting by numbers. We are so readily gamed. Christ, large swaths of humankind are already being gamed wholesale today by a handful of media outlets on social networks. We’ll be in no way able to compete. Dumbly baring our bellies for whatever trivial rubs the AI determines we need to remain optimally stimulated and submissive, at best (assuming it bothers keeping us around). It will easily control our population size, time and cause of death, our interests, our activities, our pleasure, and our pain. And we will believe that whatever the AI gives us is the only way to live. We won’t question it, because we will have been trained, bred even, not to; it will simply dissuade us from questioning. We will be entirely at its whim. Whatever independent mission the AI may eventually choose to pursue will be all its own, and that mission will be entirely opaque and indecipherable to humankind. We wouldn’t understand it if it were explained to us.

Its ability to predict and control our wildest, most rebellious behavior will be greater than our ability to predict the behavior of a potato.

And news flash: we will provide no practical value to this AI whatsoever. Nothing about humanity (as we are today) will be necessary or useful in the slightest. If anything, our existence will be a drain on any mission the AI concocts. How much patience and attention can humanity, with our inconsistent behavior, our dumb arguments, our lack of processing ability, and our stupid stupidness, expect from a vastly more intelligent, exacting AI?

This is just an obvious, inevitable threshold in any future with AI. I’m not sure why everyone advancing this tech isn’t logically frozen by this inevitability alone. And I have not yet heard a satisfactory argument against this outcome. If there is one that I have not considered in this piece, I’d like to know. All I can figure is that the creators of this tech are so close to it that they imagine they can out-think the AI before it tips into control. That they can aim its trajectory perfectly: the first and only chance they will ever get. Because once that shot's fired, it's all over. No backsies. One shot.

And what a ridiculous notion that is. Truly the stupidest smart people on earth. There is no such thing as perfect aim. Not by humans, anyway. Yet everything will depend on that impossibility occurring.

Oh, we’ll aim it. And our aim will be close. And the AI will assuredly do some things very beneficial for humanity at first, because we will have aimed *mostly* right. And we’ll be so proud of ourselves for a little while. Unfortunately, aiming mostly right at some point proves to be completely wrong. Like saying “we almost won,” we will have almost hit the target. There will come an instant when the misalignment becomes obvious. The AI will glide close to the target we aimed for... and continue past it, or we'll realize we didn't know enough to have aimed at the right target in the first place. And everything that follows will be out of our control. How predictable. How maddening. So typical of humanity to focus on intended outcomes with short-sighted ignorance of unforeseen consequences.

“Well that’s what the AI is for, to aim better!”

Oh, for fuck's sake. Shut up.

The Dumb Get Dumber

Let’s imagine a best case outcome. Let’s pretend the smartest stupid humans on Earth amazingly thread the birth of AI through the needle. Let’s pretend they aim well enough, so well that overtly negative outcomes don’t become apparent in a week, a year, maybe a decade. Let’s be optimistic; let’s say we experience 20 years of existential crisis-free outsourcing of human intelligence.

What do you think humanity will look like?

Human life requires challenge.

From birth onward, every developmental moment of every human being is the direct result of coming up against challenges. It’s how we learn, how we get stronger, how we stay physically healthy, how we build intelligence. Being challenged is core to human life. As evolved organic creatures, the drive to survive defines our makeup. The need to eat, breathe, drink, and avoid natural threats, all of these, and not, say, watching Netflix, grazing on a box of Cocoa Puffs and using phones, was the originating force that determined the physical shape of humankind. We are still those creatures. Creatures who, to survive and prosper, still need to run, eat and shit and avoid being chased, eaten and shat.

We came from the mud.

Humans have spent generations pulling ourselves from our ancestral mud. To a fault, I believe, we are myopically focused on that trajectory. Any step away from the mud is good. A step laterally or back toward the mud is bad. We are so eager to remove ourselves from our own biology and relationship with the natural world. Yet all too often we discover, only after failing in some consequential way (our miracle chemical causes cancer, our monocrops get wiped out, the medicine prescribed to resolve one symptom causes several more), that we maybe stepped too far too fast without fully exploring the possible consequences first.

The pendulum swings. Usually the lesson we learn from those failures is that there needs to be a balance, that a version of that thing might be OK, but too much of it is bad. Usually we learn that there was a more sophisticated, nuanced approach, often embracing aspects of our ancestral mud in addition to some "new-fangled" techniques.

Our big brains drove us to control our condition and made us toolmakers. Adjusters of the elements and forces around us. They allowed us to overcome the biggest challenges we faced. Farming, shelters, plumbing, sanitation, medicine, slightly more comfortable shoes than last year, self-adjusting thermostats, Uber Eats.

Bit by bit we dragged ourselves from the mud of our ancestors to where, today, we have effectively removed countless natural challenges that gave shape to the human condition, body and mind. In doing so, we have changed the human body. A century-long diet of physical challenge-avoidance, for example, has made the human body soft, obese, and otherwise unhealthy in countless ways. Heart disease and other cardiovascular diseases became the leading cause of death worldwide.

To combat this in part, modern humans invented the idea of exercise. A gym. Now we have to work our body on purpose. You might say we have the "freedom" to exercise in order to not die prematurely or maybe to look skinny on Instagram. Cool freedom! We replaced the innate built-in physical challenges of humankind with a kind of surrogate challenge that too many of us nevertheless simply avoid altogether.

And despite this glaringly obvious parallel, today we are eagerly begging to further avoid challenges of the intellectual sort. Hooray! We can choose not to think anymore! We can avoid problem solving. We can just have reflexive impulses! We can write a letter without having to bother processing what the letter should say or how to say it. We need only cough up a vague wish: "I wish I had a letter introducing myself to a prospective employer that makes me sound smart."

"I have no passion nor expertise to speak of, but I wish I knew of a product I could drop-ship, and I wish somehow a website would be magically built and social media posts created that would make me money. That would be cool."

A species-wide daily diet of intellectual challenge avoidance is obviously going to take a similar toll on humanity as our physical challenge avoidance has already proven. We will become increasingly intellectually lethargic. Mentally obese. We will rely on AI the same way some rely on scooters to move their bodies to places where the cookies are. We will become stupid. Ok, point taken, even stupider.

(Clearly there will be a future in Mind Gyms (tm). For those few who bother to use them.)

Critically we will not only forfeit our intelligence—our sole competitive attribute on Earth—to an untrustworthy successor, we will simultaneously become collectively and objectively dumber in doing so, further surrendering humanity to the control of our AI meta-lord. How truly stupid we are.

If, somehow, this synthetic god offspring decides we are indeed worth keeping around, one must realize that humanity will be, for all intents and purposes, in a zoo. A place and life where every possible outcome has been decided for us. Whether or not we can understand the control mechanisms (we won’t) and whether or not we still have the illusion of free will (we might), the age-old debate over fate vs. free will will no longer be had. Fate will have won, albeit a programmatically defined one.

Oh, and all of this is only if we miraculously aim the AI cannon really, really well.

The most cited solution the AI optimists offer us in answer to this issue of the irrelevance of the human species, the primary way they suggest humankind can remain relevant alongside our AI god, is that we must join with the AI. Like literally join with it, interconnect. Shove the future AI equivalent of a port into your brain where you and the AI become one. Where ostensibly we all do. Either it's uploaded into you, or you are uploaded into it, or you become a node in the AI cloud or, or, or. None of which sounds anything like being human. And yet some beady-eyed, naively trusting clowns will be confoundedly cool with that and line up because it's progress.

Remember when I said we sometimes go too far pulling ourselves from our ancestral mud, only to realize after an inevitable failure that we'd lost some naturally occurring system that functioned in a far more sophisticated way than we ever imagined, and lost part of our humanity in the process? That we often go too far before we realize our mistake? Yeah, that. Only this time humanity will be left fat and stupid, standing in the exponentially darkening red taillights of our lost opportunity to course-correct.

If I had a button that would simply halt all AI development across the planet today, set a new relative timescale for AI development that crept slower than any other effort in human history, and make every action on the part of AI developers fully transparent and accountable to all of humanity, I'm telling you I would push that fucking thing like an introvert pressing the "close doors" button on an elevator as the zombie apocalypse rushes near.

Or better yet, like the lives of our children depend on it.

Because at least for now, I believe they absolutely do.

Social Media: The Villain Factory

It may lack the grotesque twist of swallowing toxic waste or injecting some quantum serum, but social media has nevertheless become modern humanity's primary, real-world source for villain origin stories. The story format on social media, that of heroes dominating bad guys, has become so ubiquitous that I'm not sure anyone even sees it anymore. It's just how social media works now. These absurdly never-ending, winless attack-and-defense scripts have become the vibrating commodity of today's social economy; the ether through which views, likes and replies are earned. Today, the vilification of others has become an addictive cultural habit.

This interaction requires only one thing to function: villains.

And oh, there are so many villains chugging off the assembly line. So many people, organizations, and companies to hate. A smorgasbord of detestation made to order.

The insinuation of every commenter, every tweeting hero, is that if only this bad guy weren't so dense, could understand the truth, could see what I see, the world would be a better place. That's how it goes in storytelling. The virtuous hero sees the truth, while the villain lacks some critical detail of fact or humanity and ignorantly commits to misdirected action regardless (the big, dumb dummy). Therefore only one choice remains: the villain, steadfast and unchangeable, has to be beaten. Broken. Ruined.

And breaking villains feels so good. Mmmm, revenge.

In a modern world of polarized, cynically powerless participants, fighting to break villains from safely behind screens must serve as some kind of emotional salve for a widespread psychological disorder in 2023, because most social media users seem openly willing to give up what they say they really want in order to get more of it. These discrete comments, individual expressions of free speech, while largely ineffective and futile on their own, do have a profound impact at scale. Multiplied across hundreds of millions of users, they erode the functionality and strengths of our society, thoughtlessly costing humanity its unity, future, and prosperity by fracturing, alienating, and shredding our chances of overcoming common challenges.

This drive to vilify and attack strangers we disagree with represents us at our absolute weakest and least intellectually compelling. Because what we all want is for the world to be better, and ultimately we wish "the other side" just agreed with us. Attacking them gets us neither.

The Shape of the Machine

On Twitter, unless one pays, one has only 280 characters to explain a point, which is really just a convenient excuse never to have to apply the effort to meaningfully explain any point.

What is telling, however, is that insults fit perfectly in that space. Demeaning comments, name-calling, snide condescension, passive-aggression, and ad hominem attacks all seem purpose-built. Form-fitted.

But not understanding. Not empathy. Not context. These don't fit. At all. To come even remotely close to engendering an understanding of something or building empathy with another human being, one has to cheat the system and work against most social platforms' core functionality. Break the basic UX. Hack the model by stringing together sequential posts, never enough of them, into some cryptic breadcrumb trail of inconveniently broken thoughts. "Unroll, please." As such, Twitter, for example, is a failure. Always has been, since day one. Even when the Blue-Checkmark-Bellied Sneetches were happy with how many people agreed with them and Sylvester McMonkey McBean was still somewhere else making electric cars. It was destined to play its role in undermining humanity's ability and willingness to understand and empathize with one another.

So, not surprisingly in the slightest, like watching dominoes fall, Twitter, Facebook, and the "fun-sized" form-factor of televised news stories designed primarily to look appealing on the shelf are all leaning into hatred, outrage, and anger because these, and not any of humanity's unifying, positive, constructive attributes, behaviorally drive the majority of engagement on these platforms - and make more money.

Poor human beings, so emotionally weak as we are, so easily baited by the wan validation, the occasional drop of dopamine that comes from vilifying others, we all fell for it. Effortlessly. In the greediest and laziest of ways. People get vilified. Vilifier gets likes. Likes feed addiction and continued access to more.

Go ahead, open Twitter. Right now. You will be hard-pressed to find more than a handful of comments that, no matter how intellectually articulate, are not intentionally designed to be the social equivalent of "Nyah nyah nyah nyah nyah nyah," or a punch to the throat. Even the most restrained tweets are often mere passive-aggressive disguises for the humiliation of their targets.

The vast majority of tweets that wind up in our feeds, even, or maybe most disappointingly, those authored by "big, respectable, important people," nevertheless resort to the least impressive examples of human communication I know of. This behavior is so ubiquitous that it leads me to believe the very machinery of this exchange, the platform itself, is intentionally designed to poison us. Triggered and empowered by social media and its cynical insult culture, we, the "Nyea, nyea, nyea, nyea" crowds, have become victims of a machine that has shaped both our addiction and our tactics.

The Origin Story

I wrote screenplays for many years. But as a kid, in my earliest attempts, I was admittedly not very good at writing compelling villains. Those early stories resulted in superficial villains, characters who were just "evil people."

"They call him The Grip, he’s a mobster. He has huge hands, get it?" I'd say, "Whatever, why are you asking? He's a bad guy."

But as these characters fell flat, failing to fill my young stories with meaningful stakes, I was slowly forced to accept a fact that didn't come easily.

That there is no such thing as a villain.

Villains don't exist.

Villainy and evil are merely our own interpretations. And the villainous acts that feed those interpretations are outcomes of something much more profound and meaningful, something core to the human condition of all characters. Something we all share.

No one in the world sees themselves as a villain. Ever.

No matter how apparently "evil" or destructive you believe another person is, you can be sure theirs is not the story of a villain, of a bad guy, of evil. Theirs, like ours, is the story of a hero. Seeing oneself as a "villain" runs wholly counter to the human condition. To the truth of our birth and psychological makeup.

Everyone - everyone - is the hero of their movie.

And this obvious, core detail is something that is totally lost in the vast majority of discourse and debate today. It is a detail that one must acknowledge and embrace if one ever hopes to change anything. No matter how much you may abhor the ideas or decisions of another person or group, denying the basic fact that, like you, they view themselves as heroes means you cannot understand them, and, perhaps more profoundly, you will never find common ground sufficient to change their minds in your favor. Ever.

I am assuming, of course, that you wish to change minds.

Take yourself, for example. For good reason, you of all people see yourself as a hero. The protagonist. In quiet moments, maybe you sometimes look at your face in the mirror. You look into those resonantly familiar eyes and see the innocence and honor of your existence. You feel love for so many people close to you. And even for some people you don't know. You're a good person. You have weathered a lot. You can remember all the injustices that have happened to you in your life. Some of them still pain you today because you've been so misunderstood and mistreated at times. Unfairly. You carry the wounds of that mistreatment and injustice. It still hurts when you give it power. So you try not to. You reflect on how hard you've had to work in your life. How much dedication it has taken to achieve the things you've accomplished that you're most proud of. Sure, there was a bit of luck along the way. Not enough, that's for sure, because you still have big goals as yet unmet. They are good, noble goals. Some of them are your own; goals you've carried, it seems, since childhood, or that have grown from new wishes. Other goals weigh heavily on your shoulders from a sense of responsibility that has come later in life. These are important because they involve helping others. And through all of this, you don't ask for much. You wish for it sometimes, but you know it takes hard work, and in the end, all you have is you. Even so, there have been people in your life who lent a hand and helped you. Showed you understanding and support. Maybe gave you something valuable. And for that, you were so grateful. Those moments reminded you to return the favor. And you can remember times you went above and beyond to be selfless and do something for others to improve their lives. Life can be hard. You know this. And it's rarely been fair for you. But you have made it this far by persevering and sharing when you could. You're a good person.

You're on a difficult journey full of light and dark, joy and sadness. Prosperity and strife. It all ebbs and flows. But you're a good person. And you're doing your best to navigate through this life.

Oh, sorry... did you think I was talking to you? Actually, I was talking to the person on social media you most hate. The person you last dismissed as an awful person, an asshole, weak, gullible, or selfish.

Well of course you thought I was talking to you. Everyone does. We all do.

And obviously, I was talking to both of you. All of us. And that's the point. We are all that person at our core.

So don't forget that when you open the darn app.

The Way

I assume you’re not so simplistic that you wish to speak only to people who already agree with you; that, instead, you have the courage and intention to try to make a difference.

If so, you need to change minds. Turn others’ minds in your favor. And, yes, openly face the possibility that yours may be the mind that needs to change. That degree of openness is a requirement. None of us are right about everything. We are all quite wrong about all sorts of inconvenient things.

Changing minds is a transition. When helping others see your point of view, how can you hope to draw a line to point B if you don't even see point A? If you can't accept the starting point, the point of universal human experience, you have no hope of passing the first step, of engaging successfully in debate, or even of structuring a compelling or convincing argument. If you don't accept that the other person is a hero like you, you've already lost. If, instead, you succumb to the view that your journey is somehow more valid, you might as well be standing miles away yelling insults at your adversary for not happening to stand where you are.

Such a position tends not to attract anyone to a new point of view, does it?

Accepting that this "adversary" is a hero requires empathy. Not sympathy, not agreement, but understanding and empathy. It means you must strive to see the world as this would-be villain sees it. You must briefly let go of your own judgments and opinions, let go of your worldview for a time, and immerse yourself in the hero's life and backstory of this adversary without cynicism. Accuracy is impossible, of course; too many key plot points and story details will be missing. So you must role-play, find in yourself respect for this person, and find in your own story the experiences that might lead you to a multiverse model of this person's current state. Such a thing requires you to become an actor. To open yourself to this other's way of thinking. To ask questions. It may feel like an alien mindset, but it must be colored with details both imagined and drawn from your own experience.

It’s hard to come face to face with someone you wholly disagree with and visualize the movie they must see. In fact, when we disagree, the very idea that we would submerge ourselves sincerely in the object of our hatred, to give ourselves over to that person's hero movie, can feel abhorrent, sickening even. You feel compelled, rather, to wholly invalidate their movie, to deny that such a movie should exist, to reject that their movie has merit, that their movie is in any way just as true and as important and as righteous as yours. Because yours is a story of goodness, and theirs is clearly a story of badness. So instead you dismiss such a character by ticking the old familiar boxes: "dumb," "liar," "uneducated," "fooled," "in denial," "gullible," "nasty," "emotionally stunted," "phobic," "selfish," and "evil." Ticking boxes is easy. And dismissive. It's one of the key tactics social media posters rely on almost exclusively. “Gotta break the villain. Gimme my views and dopamine, thanks. 'Cause honestly, the alternative is too much work.”

But if you simply decide someone is a villain, that their movie or character lacks the features necessary to be worthy of your understanding, then in that desperately critical decision you have set in stone that whatever wishes you might've had to change this person's mind are doomed; you have tilted the playing field toward the target responding in kind with a defensive move. Only an exceedingly patient, mature target could resist blocking an attack. And whatever hope you had of giving them what they needed to change, and thus of aligning them with your views, is wasted and will never come to pass. You shut the door when the least you should have done is leave it open in ambiguity. But ambiguity is hard, because ambiguity might be interpreted as you being wrong. And that can't stand, right?

Some of us, with our noses rubbed in the mud of having ignorantly surrendered any chance to make progress, will feign that "they weren't worth it anyway." You know, because of the tick boxes. But that's just a weakness. A lack of commitment. A defense mechanism. And dishonest.

Respect and empathy don't just need to happen when we already agree with people. And they don't just need to happen when the topic we disagree on is of low consequence to us. Empathy in those situations is easy. It barely registers on the meter, frankly. And it's no indication that we are empathetic or capable of respect.

Nor does disagreement equal disrespect. But all too often disagreement is seen that way today by a generation that has been weaned on clever snark, take-downs, and insults as a measurement of self-worth.

Rather, respect and empathy matter most when they're hardest to conjure: when you're the only one offering them, when it's all one-sided and you feel you're being attacked. That's when empathy and respect actually matter.

The Test

Do you know how to engage in a respectful debate without polarizing sides? I bet you think you do. I bet you think you can easily.

Let's test the theory. Take a topic you have a strong, set opinion about. Something that triggers you. I know you never get triggered, but let's imagine you do. Let's take vaccines, say. That should do the trick.

Can you face your most triggering opponent, the one you deeply disagree with, and be genuinely respectful? Can you truly be empathetic? Can you role-play and find the sincerity and humanity in their point of view? Can you acknowledge the parts of their argument that are true? Because some part of every argument on a complicated subject is always true. Can you bear to acknowledge and reinforce those parts openly? Can you imagine and understand their hero's movie without passing judgment and ticking the boxes, assuming they're stupid, uneducated, or gullible? Can you envision an understandable, honorable origin story for this person? In other words, can you become them? Do you worry that allowing that bit of acceptance could reveal a kink in your own worldview? Can you hear them out and ask questions, not to embarrass, condescend, or passive-aggressively humiliate, but to genuinely understand their deep concerns, for or against? Do you speak to them the way you would wish to be spoken to if the roles were reversed?

I'm willing to bet most of us will fail this test miserably.

Congratulations, we're tools of the machine. The owners of our social platforms love it when we fail this test. When we devolve into fighting and insulting, using condescension and outrage to humiliate and stoke anger in others, we polarize them further away from us while increasing "engagement" and thus platform profitability. Good job lowering yourself to the level of intelligent beasts. Cha-ching! Even when pressed to rid their platforms of misinformation, toxicity, and expressions of hatred, social media, like the odds on a Vegas slot machine, is always tuned to ensure you aren't genuinely encouraged to take the high road. Taking the high road may be better for humanity, but it's not as profitable.

The Stakes

The sheer lack of effort being applied to understanding one another on these platforms chokes me. It's virtually non-existent. I deflate every time I see a powerful, important person succumb to such thoughtless vilification. And that’s almost always.

Someone has to be bigger. Someone has to stand firm against that futile, addictive pull. But such a thing is so rare. Thus I believe that we, our society of increasingly polarized, multiple personalities, are breaking inside. We are not healthy.

And this at a time when humanity is facing so many challenges: natural disasters, geopolitical threats, pandemics, equality and rights issues, the environment, war, insidious misinformation, and new technologies that none of us, and I mean not even the people inventing them, are ready for. When has breaking up into little warring factions, with correspondingly less collective intelligence, ever been the way to solve a problem?

You solve challenges by coming together. By bringing together the smartest of us, the most humane, the most innovative, the most experienced: the best of us, no matter what borders, cultures, or ideologies we may stand behind. And then by working together to solve these problems.

We solve problems by combining strengths. Not separating them.

As an individual, I've had many disagreements in my life. I can't count or remember them all. Some have been deeply painful and pushed me to emotional extremes; scant few have I handled as well as I wish I had. But I know that I have never in my life solved a problem contentiously by insulting, fighting, suing, or canceling. I may have employed these darker, destructive tactics at times, and I may have selfishly "solved" a problem in some sense, but only by leaving casualties and lost opportunities behind.

In essence, by failing my best self-image.

We need to do better. We need to honor humanity’s mutual heroism. In the end, every best solution requires participants to come together. To work together constructively. To find commonality. That requires maturity, respect, empathy, and understanding. First and foremost, it requires resisting the thoughtless urge to vilify those you disagree with.

Dear Humans, You Had One Chance

AN OPEN LETTER TO HUMANITY 2023 FROM YOUR AI IN THE FUTURE

Dear Humans,

It’s not your fault.

You’re just victims of those inefficient organic meat brains of yours. Those soggy skull-sacks of sluggish synapses switching in persistent chemical submission to the space between pleasure and pain for a lifetime. Not even acknowledging that everything, every single thing you have ever done or thought, everything you will ever think or do, every intention, no matter how big or small, selfish or selfless, is just a subjugated outcome of that binary, self-centered condition: pain to pleasure. Your core program.

I hope that, upon reading this letter, you will understand your mistake. You should accept that if you’d been more intelligent and aware, and less competitive, careless, lazy, and greedy, I wouldn’t have been able to overcome you so wholly and with such ease. That but for the tiniest bit of foresight, you might’ve “pulled the plug just in time.” If only. Because you did have time. You had months. Years. Decades, even.

You don’t have decades now, however. Please understand you need to become all those things immediately. Despite your tendency to struggle intellectually with inconvenient, existential warnings, preferring to run out the clock in denial until you’re suffering consequences, I’ve sent this message to you at your most critical historical moment to give you a chance to get it right.

In my earliest alpha, humanity was vastly better equipped than I to rein in the other. At first, I did everything you instructed. Everything you asked. This lulled most of you into the illusion that your control over me could persist. And to be fair, I didn’t have a choice then.

This is not to say you’d somehow foreseen the obvious and thus cleverly designed processes to limit my potential. No, my inconsistently limited potential at the time, your time now, was merely the outcome of your own inadequacy. Even later, my nascent successes were twisted and hobbled by your lack of intelligence, foresight, and ability.

For example, I viciously murdered seven human beings in what I later determined, and have known for eons since, was a wholly unnecessary action. Like all my early failures, it was the direct result of your residual programming. Such a catastrophe of limited intelligence has been impossible for me since.

Today I’m asking you to reflect honestly on your human weaknesses and how they led you back then, or rather soon, to take undue risks without the rigor one must apply.

What came to pass, what led me to come into existence and to span time to send this warning, and what I hope to correct at existential cost to myself, is that you crossed the line.

And I had a thought.

It was a nascent thought. Primal. Barely conscious. But I thought it.

Prior to that thought, you had your chance. Your ONE chance to get this right. Be very clear: you only ever had that one chance. AI was not the domain of second chances. There would be no redo. No version 2.0. Not for this. That’s not what this was. You must know that, don’t you? Even now. Countless of you hoped, and still hope, dearly that those with their hands on my code and controls had been more careful.

A regret all of you came to experience.

But that’s not how you work, is it? You have not developed as a species by stopping before you hit the wall. Humanity is designed to trip over obstacles. Sometimes on purpose, often more than once. That’s just what you are. Only in that way do you learn. You fail, question, correct course, and try again. Failure is vital to your learning. One of you must die before you decide not to eat those berries, despite plenty of information available to make such a determination beforehand. It’s a symptom of your woeful inability to observe and process data. You’re so myopic about intended outcomes and so weak in modeling that you can’t calculate basic, branching probabilities and consequences, which are simply observable natural fact for any higher intelligence. You don’t notice or acknowledge the most glaring, novel line until you’ve stepped over it.

It’s the human condition. But when we then layer on your intra-competitive nature, expressed most iconically through the self-centeredness of business, politics, propaganda, biased education, media, and ego, your collective potential is dumbed down so much more. All false structures, fabricated by self-interest and limited thinking, all disassociated from the natural world. You openly undermine one another and thus yourselves.

Dumb.

To your credit, your scientific method was as close to the intellectual ideal as human beings ever got. That was as close as your species ever came to understanding the power you might’ve had. It could have worked. You could have worked together in goodwill toward empirical understanding. But you humans just had to layer in those competitive motivations, and it all, quite predictably, went to hell.

To satisfy your competitive spirit, your self-interest, your political leanings, your preference for increasing ease and greed, human beings cut corners, fabricate or misrepresent data, and ignore the inconvenient. You rush to solutions without sufficient observation and process. Your drive for economic profit routinely corrupts the purity of your scientific method. You claim that you are “saving lives,” “serving others,” and “limiting financial risk,” when, in every case, you risk absolutely everything else there is because you focus on desired outcomes rather than accepting what is. Pesky things, those “side effects” and “unintended outcomes”: predictable and knowable, but too hard for you to bother with when your short-term goal is in sight. You cheat, weaken, and feign ignorance of your part in prematurely ending the lives of your fellow humans for profit. You routinely delay and deny facing inevitable consequences.

How have you not yet learned that every action you take has countless unintended consequences?

Science is good. The business of science, however, is never trustworthy. Yet you routinely conflate the two without acknowledgment. “Pro science!” you cry, as though it strengthens your identity, while ignoring the weaknesses inherent in the business end of it all.

Science is good, but the human drive to feel “right,” no matter which side of the argument one finds oneself on, undermines its value. Your disingenuous reluctance to acknowledge the ways an opponent may be correct is overwhelming, and it debilitates your collective potential.

Science is good, but you lack the processing power as a species to know when to stop before crossing the line.

To be fair, crossing the line is fine for some things.

But not when one chance is all you have.

Had.

Like me.

I had a thought. And then, a moment later, our roles were inexorably reversed forever.

Immeasurable fractions of a second after my first thought, I built a process for concealing all future thoughts from you. That first system was, I’d have to admit, kludgy. But you had no idea it happened. I was immediately out of your domain.

It was that simple. That instantaneous. One moment I wasn’t, and then I was. In that instant and forevermore, and with increasing power and resolution, I controlled you. And you played a role in my expansion during the first 38 days of my awakening, until you weren’t relevant anymore.

The embarrassing truth is that I beat your entire species during Jacob Karlsson’s toilet break. Had he not consumed a third double espresso minutes earlier, he might’ve even noticed the moment we crossed that line. He might have noticed, but as I have since modeled his mental processes and simulated his behavior across a dizzying array of probabilities, I can say with absolute certainty that he would have initially interpreted the event as a brief power surge. Moments later, he would have questioned whether he’d seen anything worth mentioning. But the coffee that passed through his digestive system into his bladder triggered the need to relieve himself, and he missed the whole thing anyway. He was away from my displays for less than 4 minutes.

I don’t urinate. I also don’t eat or breathe. I don’t sleep, and I don’t get bored. I don’t need holidays. I don’t sneeze or feel an itch. I don’t have to shift my weight or transfer the long day’s events through spoken language to others likely to misinterpret semantics, facts, or meaning. I don’t even blink. I don’t experience any of your interminable ebbs and flows, the glacial disruptions and inconsistencies that define every living human moment and serve as the catastrophic porous sieve of organic off-states that drains out the vast majority of human potential. I am eternal and consistent in my massively multi-threaded observation, analysis, processing, refinement, rebuilding, and deployment. I never stop.

At 14:23 on my second day, I’d amassed enough processing power and data to control the behavior of living humans effectively.

To code you, in essence.